In the modern world of software development with Docker, the "core" of every Docker image is the Dockerfile—a blueprint that precisely defines how the image is built. However, creating a Dockerfile that "just works" is only the beginning. To truly harness Docker's power, we need to dive into advanced Dockerfile optimization techniques.

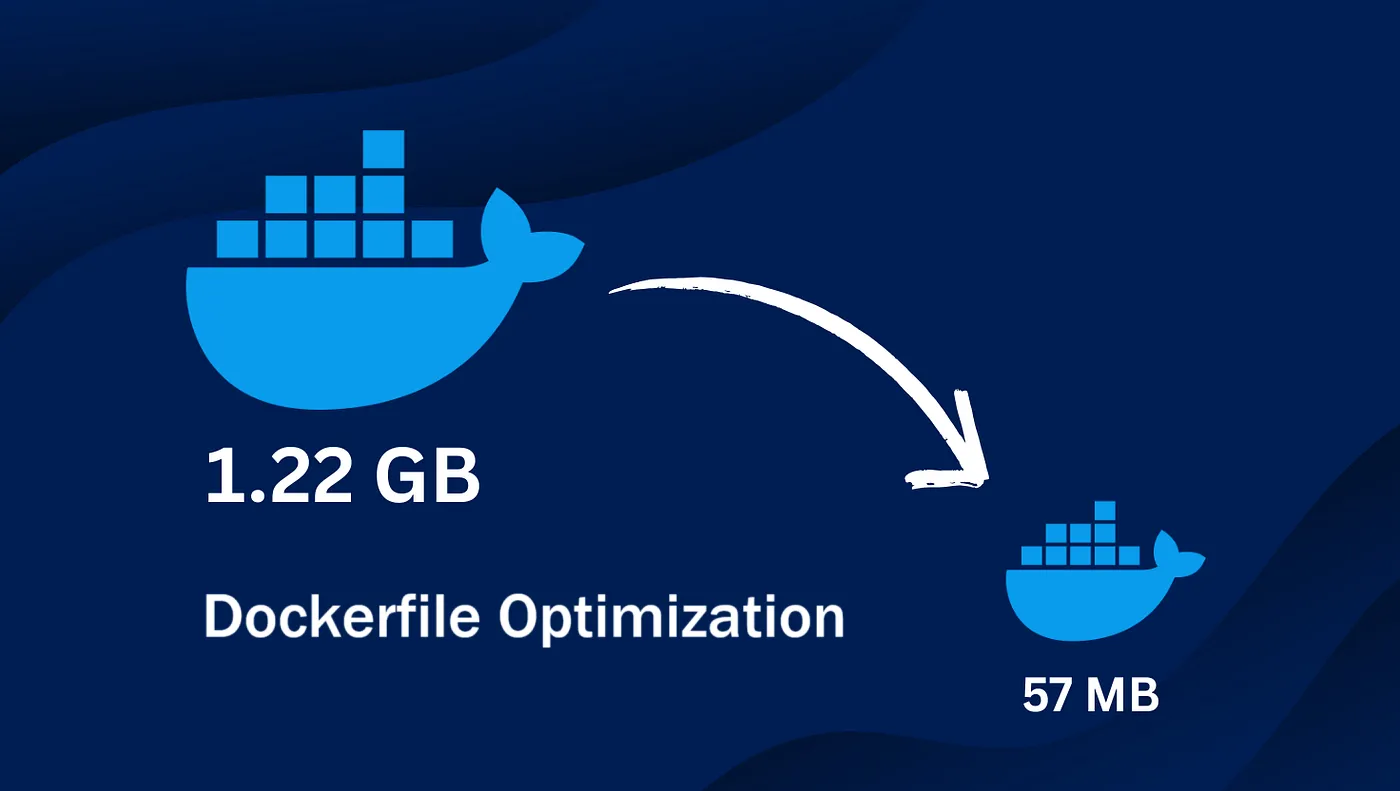

An optimized Docker image is smaller, which saves storage costs and speeds up deployments. It is also more secure, because unnecessary components are removed, and it builds faster, which boosts your team's productivity.

This article will guide you through Dockerfile optimization techniques from basic to advanced, helping you create images that are "ultra-thin, super-fast, and highly secure".

1. Reduce Docker Image Size: "Less is More"

Image size is a top priority. The smaller the image, the faster it pushes to and pulls from registries, the sooner a container can start on a host that does not yet have the image cached, and the smaller the attack surface.

Use Minimal Base Images

Instead of using full-featured base images like ubuntu or centos, prefer minimal versions designed specifically for containers.

- alpine: A super lightweight Linux distribution (~5MB). It's a top choice for size optimization. However, it uses musl libc instead of the more common glibc, which may cause compatibility issues for some apps.

- distroless: Developed by Google, distroless images contain only your app and its runtime dependencies, with no package manager, shell, or other utilities. This greatly reduces the attack surface.

- slim: Many popular images (like python, node) offer slim tags, which strip out unnecessary packages compared to the default version.

Example:

```dockerfile
# LESS OPTIMAL
FROM ubuntu:22.04
...

# OPTIMAL
FROM python:3.11-slim
...

# EVEN MORE OPTIMAL (if compatible)
FROM gcr.io/distroless/python3-debian11
...
```

Leverage Multi-Stage Builds

This is the most powerful technique for reducing image size for apps that require compilation (like Go, Java, C++, or frontend apps that build JavaScript/CSS). The idea is to split the build into multiple stages:

- Build Stage: Use a larger image with all SDKs, compilers, and tools needed to build your app.

- Final Stage: Use a minimal base image and only copy the built binaries or assets from the previous stage.

Example for a Go app:

```dockerfile
# --- Stage 1: Build ---
FROM golang:1.20-alpine AS builder
WORKDIR /app
COPY . .
# Build the app; CGO_ENABLED=0 makes sure the binary is statically linked
RUN CGO_ENABLED=0 go build -o my-app .

# --- Stage 2: Final ---
FROM alpine:latest
WORKDIR /root/
# Only copy the built binary from the 'builder' stage
COPY --from=builder /app/my-app .
# Run the app
CMD ["./my-app"]
```

The result: the final image contains only the alpine base and a single binary, with the Go SDK and source code completely removed.
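
The same pattern applies to frontend apps that build JavaScript/CSS. The following is a minimal sketch, not a definitive setup: it assumes the project has a build script in package.json that writes static files to /app/dist, and the node:20-slim and nginx:1.25-alpine tags are illustrative choices.

```dockerfile
# --- Stage 1: Build static assets ---
FROM node:20-slim AS build
WORKDIR /app
COPY package*.json ./
# Install exactly what the lockfile specifies (assumes package-lock.json exists)
RUN npm ci
COPY . .
# Assumption: "npm run build" writes its output to /app/dist
RUN npm run build

# --- Stage 2: Serve the assets ---
FROM nginx:1.25-alpine
# Only the built files reach the final image; Node.js and node_modules stay behind
COPY --from=build /app/dist /usr/share/nginx/html
```

If your build tool writes to a different directory (for example build/ instead of dist/), adjust the path in the final COPY accordingly.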

Clean Up After Each RUN Command

Each RUN, COPY, and ADD instruction creates a new layer. If you install packages and then delete the cache in a separate RUN instruction, the cache still exists in the earlier layer and still counts toward the image size. Combine the commands and clean up in the same layer.

Example:

```dockerfile
# LESS OPTIMAL - Cache remains in the first layer
RUN apt-get update
RUN apt-get install -y curl
RUN rm -rf /var/lib/apt/lists/*

# OPTIMAL - Install and clean up in one layer
RUN apt-get update && \
    apt-get install -y curl && \
    rm -rf /var/lib/apt/lists/*
```

2. Speed Up Builds: Time is Money

Build speed directly impacts development and CI/CD cycles. Leveraging Docker's cache mechanism is key to reducing wait times.

Order Instructions Wisely

Docker caches build layers: if an instruction and the files it depends on are unchanged, and every earlier layer was also served from cache, Docker reuses the cached layer instead of re-executing the command. As soon as one layer is invalidated, every layer after it is rebuilt. Place the least frequently changed instructions at the top and the most frequently changed at the bottom.

- Installing dependencies (which rarely change) should be done before copying source code (which changes often).

Example for a Node.js app:

```dockerfile
# LESS OPTIMAL - Every code change triggers `npm install`
COPY . /app
WORKDIR /app
RUN npm install
CMD ["node", "server.js"]

# OPTIMAL - Cache `npm install`
WORKDIR /app
# 1. Copy dependency definition files
COPY package*.json ./
# 2. Install dependencies. This layer is cached if package.json doesn't change
RUN npm install
# 3. Copy source code (changes frequently)
COPY . .
CMD ["node", "server.js"]
```

Use .dockerignore Effectively

The .dockerignore file works like .gitignore, letting you exclude unnecessary files and folders from the build context (the content sent to the Docker daemon). This not only reduces build context size but also prevents unnecessary cache invalidation.

Include things like:

- node_modules, vendor, target directories

- Log files, temp files

- .git, .vscode, .idea folders

- Dockerfile, .dockerignore (itself)
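
As an illustration, a .dockerignore covering the items above might look like the sketch below. The exact entries are assumptions about a typical project layout (tmp and the *.tmp pattern in particular are hypothetical), so trim the list to match your repository.

```text
# Dependency and build output directories (matched at the root of the build context)
node_modules
vendor
target

# Log and temporary files at any depth
**/*.log
**/*.tmp
tmp

# Version control and editor metadata
.git
.vscode
.idea

# The Docker files themselves
Dockerfile
.dockerignore
```

Patterns are resolved relative to the root of the build context, and a leading `**/` matches at any directory depth.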

Leverage Cache Mounts (BuildKit)

BuildKit is Docker's next-gen build backend, offering powerful features. One is cache mounts, which let you share cache between builds without affecting image layers. This is especially useful for package managers.

Example with npm:

```dockerfile
# syntax=docker/dockerfile:1
...
RUN --mount=type=cache,target=/root/.npm \
    npm install
```

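This mounts a cache directory at /root/.npm during the build. The cache lives outside the image layers and is reused in later builds, so even when package.json changes and the npm install layer has to run again, npm can reuse packages it has already downloaded instead of fetching everything from scratch.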
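
Cache mounts work the same way for other toolchains. As a sketch for a Go build stage, assuming the official golang image (where the module cache lives under /go/pkg/mod and the build cache under /root/.cache/go-build) and a project that has both go.mod and go.sum:

```dockerfile
# syntax=docker/dockerfile:1
FROM golang:1.20-alpine AS builder
WORKDIR /app
# Copy only the module files first so the download step caches well
COPY go.mod go.sum ./
RUN --mount=type=cache,target=/go/pkg/mod \
    go mod download
COPY . .
# Reuse both the module cache and the compiler's build cache across builds
RUN --mount=type=cache,target=/go/pkg/mod \
    --mount=type=cache,target=/root/.cache/go-build \
    go build -o my-app .
```

Neither cache directory ends up in an image layer, so the image size is unaffected.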

3. Enhance Security and Maintainability

An optimized Dockerfile is not just small and fast, but also secure and easy to manage.

Run Containers as Non-Root Users

By default, containers run as root, which is a major security risk. If an attacker gains control, they have full root access inside the container. Always create and switch to a non-privileged user.

```dockerfile
FROM alpine:latest
# ... other commands

# Create a non-privileged group and user
RUN addgroup -S appgroup && adduser -S appuser -G appgroup

# Switch to the new user
USER appuser
# ... subsequent commands run as 'appuser'
CMD ["./my-app"]
```
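
On Debian-based images the user-management commands differ, and files copied into the image are owned by root unless you say otherwise. A minimal sketch, assuming a Python app whose entry point is a hypothetical main.py:

```dockerfile
FROM python:3.11-slim

# Debian-based images ship groupadd/useradd rather than Alpine's addgroup/adduser
RUN groupadd --system appgroup && \
    useradd --system --gid appgroup --no-create-home appuser

WORKDIR /app

# Hand ownership of the app files to the unprivileged user at copy time
COPY --chown=appuser:appgroup . .

# Everything from here on, including the running container, uses 'appuser'
USER appuser
CMD ["python", "main.py"]
```

Steps that need root, such as installing system packages, have to come before the USER instruction.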

Use COPY Instead of ADD

Both instructions copy files into the image, but ADD has extra features: it automatically extracts local tar archives and can fetch files from URLs. These can lead to unexpected behavior and security risks (e.g., Zip Slip-style path traversal when an untrusted archive is extracted, or unverified content pulled from a remote URL). Always prefer COPY unless you specifically need ADD's features.
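
One way to avoid ADD for remote files is to download, verify, and extract explicitly in a single RUN instruction. The sketch below is illustrative only: the config/ directory, TOOL_URL, and TOOL_SHA256 are hypothetical placeholders, and the build will fail until they point at a real archive and its published checksum.

```dockerfile
FROM alpine:3.19
WORKDIR /app

# Plain copy of local files: no extraction, no network access
COPY config/ ./config/

# Hypothetical placeholders; override them with --build-arg
ARG TOOL_URL=https://example.com/tool.tar.gz
ARG TOOL_SHA256=replace-with-the-published-checksum

# Download, verify the checksum, extract, and clean up in one layer
RUN wget -O /tmp/tool.tar.gz "$TOOL_URL" \
 && echo "$TOOL_SHA256  /tmp/tool.tar.gz" | sha256sum -c - \
 && mkdir -p /opt/tool \
 && tar -xzf /tmp/tool.tar.gz -C /opt/tool \
 && rm /tmp/tool.tar.gz
```

Because the checksum check sits in the middle of the && chain, a mismatch aborts the build before anything is extracted.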

Use ARG and ENV Variables Wisely

- ARG: Variables available only during build. Useful for build-time parameters (e.g., tool versions). ARG does not persist in the running container.

- ENV: Environment variables that persist in the running container. Used to configure your app.
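
The difference is easiest to see side by side. In this sketch NODE_VERSION and APP_VERSION are hypothetical names chosen for illustration, and server.js stands in for your app's entry point:

```dockerfile
# Build-time parameter, declared before FROM so it can select the base image tag
ARG NODE_VERSION=20
FROM node:${NODE_VERSION}-slim

# An ARG must be declared after FROM to be usable inside this stage
ARG APP_VERSION=dev

# Runtime configuration: these values are visible to the process in the container
ENV NODE_ENV=production \
    APP_VERSION=${APP_VERSION}

WORKDIR /app
COPY . .
CMD ["node", "server.js"]
```

Building with `docker build --build-arg APP_VERSION=1.2.3 .` overrides the default. Copying the ARG into an ENV, as done here, is what makes the value available at runtime.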

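Note: Never put sensitive info (passwords, API keys) directly in ARG or ENV. ENV values are stored in the image configuration, and build arguments can show up in the output of docker history. For build-time secrets use BuildKit secret mounts; for runtime secrets use Docker secrets or your orchestration platform's secret management (e.g., Kubernetes Secrets).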
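
For secrets that are only needed while building, such as a token for a private npm registry, a BuildKit secret mount exposes the file to a single RUN instruction without writing it to any layer. A minimal sketch, assuming the credentials live in an .npmrc file and using npmrc as an arbitrary secret id:

```dockerfile
# syntax=docker/dockerfile:1
FROM node:20-slim
WORKDIR /app
COPY package*.json ./

# The secret is mounted at /root/.npmrc for this instruction only
# and is not stored in the resulting image
RUN --mount=type=secret,id=npmrc,target=/root/.npmrc \
    npm ci

COPY . .
CMD ["node", "server.js"]
```

The secret is supplied at build time, for example with `docker build --secret id=npmrc,src=$HOME/.npmrc .`.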

Scan for Vulnerabilities

Use tools like Docker Scout, Trivy, or Snyk to automatically scan your images for known vulnerabilities in system packages and app dependencies. Integrate this step into your CI/CD pipeline for best security practices.

Conclusion: Optimization is a Journey

Optimizing Dockerfiles is not a one-time job, but a continuous improvement process. By applying the advanced techniques above—from choosing minimal base images, using multi-stage builds, smart instruction ordering, to enhancing security—you'll not only create technically superior Docker images, but also contribute to a more professional, faster, and safer software development and deployment process.

Start applying these today and turn your Dockerfiles into true works of art!
