Whether you are a new or an experienced developer, you have probably heard the classic phrase: "But it works on my machine!" It describes code that runs perfectly on your own computer but throws unexpected errors on a colleague’s machine, a staging server, or in production.

This is the nightmare that Docker was born to solve.

Docker is not just a tool; it is a revolution in how we build, ship, and run applications. Let’s explore what this "blue whale" really is and why it is so powerful. 🐳

Understanding Docker with a Simple Example: The Shipping Container 📦

Imagine you need to ship a very complex item, like a high-end audio system, from Vietnam to the US. This system includes speakers, amplifiers, CD players, and lots of tangled cables.

- Traditional way (before Docker): You would have to disassemble everything, pack each part carefully, label it, and hope that the recipient in the US knows how to reassemble it exactly as before. This process is risky: you might lose a screw, connect the wrong cable, or find that a part is not compatible with the US power supply. Deploying applications the old way works the same way: differences between the sender’s (developer’s) and the receiver’s (server’s) environments lead to errors.

- Modern way (using Docker): Instead of disassembling it, you put the entire assembled, working audio system into a standard shipping container. The container holds everything the system needs: its own power system, racks, cables, and so on. The recipient in the US just plugs the container into a single power source and can use the audio system immediately, without worrying about what’s inside or how to assemble it.

That standard shipping container is the Docker Container. It "packages" your application together with everything it needs to run (libraries, runtime environment, configuration files, environment variables) into a single, self-contained unit that runs the same way on any machine with Docker installed.

Technically speaking: Docker is an open platform for developing, shipping, and running applications as containers. It allows you to separate your applications from your infrastructure, making software deployment fast and consistent.

Docker vs. Virtual Machine (VM): The Classic Showdown

Many people confuse Docker Containers with Virtual Machines (VMs). While both provide isolated environments, they work in completely different ways.

| Criteria | Virtual Machine (VM) | Docker Container |
|---|---|---|
| Architecture | Virtualizes hardware. Each VM runs a full guest OS on top of a hypervisor. | Virtualizes the OS layer. Containers share the host OS kernel. |
| Resources | Heavyweight. Each VM needs its own share of RAM, CPU, and disk for a full OS. | Lightweight. Only the app’s code and libraries are packaged, so containers use far fewer resources. |
| Startup Time | Slow (often tens of seconds to minutes), similar to booting a full computer. | Fast (seconds or less), since there is no guest OS to boot. |
| Performance | Lower, due to hardware virtualization and guest OS overhead. | Near-native, since processes run directly on the host kernel. |
| Portability | Less flexible: VM images are large (often several GB). | Highly portable: images are usually tens to hundreds of MB. |

In short: if a VM is like renting an entire house (with a living room, kitchen, and bathroom, even if you only need a bed), then a Docker Container is like renting a smart studio apartment: fully equipped inside, but sharing infrastructure (electricity, water) with the rest of the building.
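The "containers share the host OS kernel" row in the table is easy to check yourself. The minimal sketch below assumes Docker is installed on a Linux host; `alpine:3.20` is simply a small public image chosen for illustration. On Docker Desktop for macOS or Windows, containers run inside a lightweight Linux VM, so the container reports that VM’s kernel rather than your host’s.

```shell
# Kernel release reported by the host itself
host_kernel="$(uname -r)"

# Kernel release reported from inside a throwaway container (--rm deletes it on exit)
container_kernel="$(docker run --rm alpine:3.20 uname -r)"

echo "host:      $host_kernel"
echo "container: $container_kernel"
```

On a Linux host the two lines match: the container has its own filesystem and processes, but no kernel of its own, which is exactly why there is no OS to boot and startup is so fast.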

Core Components of Docker 📜

To understand how Docker works, you need to know these four core concepts:

- Dockerfile:
  - What is it? A text file containing step-by-step instructions for building a Docker Image.
  - Example: Like a recipe. It specifies the "ingredients" (e.g., Node.js 18), where to "copy" the code from, and how the "dish" will be "served" (e.g., run `node app.js`).

- Docker Image:
  - What is it? A read-only template created from a Dockerfile. It contains everything needed to run the app: code, runtime, libraries, and environment variables.
  - Example: If the Dockerfile is the recipe, the Image is a pre-mixed spice box made from that recipe. You can use this spice box to "cook" many identical "dishes".

- Docker Container:
  - What is it? A running instance of a Docker Image. This is the isolated environment where your app lives.
  - Example: This is the dish cooked from the spice box (Image). From one Image, you can create many identical Containers.

- Docker Hub / Registry:
  - What is it? A repository for Docker Images. Docker Hub is the largest public registry (like GitHub for code).
  - Example: Like a huge recipe library (Docker Hub), where you can find and download ready-made "spice boxes" (Images) for thousands of apps (Node.js, Python, MongoDB, etc.) or upload your own to share.
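To make the recipe analogy concrete, here is a minimal sketch that writes a tiny Node.js app and a Dockerfile matching the Dockerfile description above: Node.js 18 as the "ingredient", copy the code, then serve it with `node app.js`. The app itself, the `/app` working directory, and port 3000 are illustrative assumptions, not part of any real project.

```shell
# Write a tiny, hypothetical Node.js app that answers HTTP requests on port 3000
cat > app.js <<'EOF'
const http = require("http");

// Respond to every request with a short plain-text message
http
  .createServer((req, res) => res.end("Hello from a container!\n"))
  .listen(3000, () => console.log("Listening on port 3000"));
EOF

# Write the Dockerfile: the "recipe" for the Image
cat > Dockerfile <<'EOF'
# Ingredient: the official Node.js 18 base image from Docker Hub
FROM node:18
# Work inside /app in the image's filesystem
WORKDIR /app
# Copy the application code from the build context into the image
COPY app.js .
# Document the port the app listens on
EXPOSE 3000
# How the "dish" is served: the command each Container runs on start
CMD ["node", "app.js"]
EOF
```

Running `docker build -t my-app .` in the same directory turns this recipe into an Image. `docker run -p 3000:3000 my-app` then starts a Container from it, and running `docker run -p 3001:3000 my-app` as well starts a second, identical Container from the same Image.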

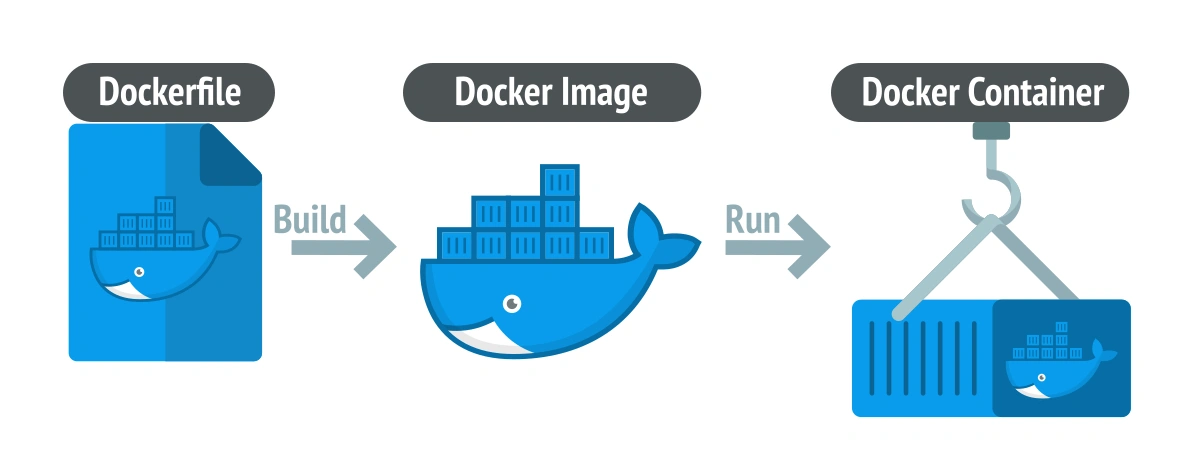

Workflow overview:

- You write a `Dockerfile`.

- You use `docker build` to create an Image from it.

- You use `docker run` to run that Image, which creates a Container.

- Optionally, you push your Image to Docker Hub for others to use.
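The workflow above maps onto a handful of CLI commands. This sketch assumes Docker is installed, that the current directory contains a Dockerfile for an app listening on port 3000, and that you have a Docker Hub account. The user name `your-dockerhub-user`, the image name `my-app`, and the tag `1.0` are placeholders to replace with your own.

```shell
# Placeholder names: replace them with your own Docker Hub user, app name, and version
DOCKERHUB_USER="your-dockerhub-user"
APP_NAME="my-app"
VERSION="1.0"
# Image reference in the form <user>/<repository>:<tag>, as Docker Hub expects
IMAGE="${DOCKERHUB_USER}/${APP_NAME}:${VERSION}"

# Build an Image from the Dockerfile in the current directory (".")
docker build -t "$IMAGE" .

# Start a Container in the background (-d), mapping host port 3000 to container port 3000
docker run -d --name "$APP_NAME" -p 3000:3000 "$IMAGE"

# Optional: log in to Docker Hub, then push the Image so others can pull it
docker login
docker push "$IMAGE"
```

Anyone with access to the repository can then run `docker pull your-dockerhub-user/my-app:1.0` followed by `docker run` to get exactly the same Image on their own machine.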

Why is Docker so important? Key Benefits 🚀

Here are Docker’s biggest benefits:

- Consistency across environments: Largely solves the "It works on my machine" problem. Development, testing, and production all run the same Image, which minimizes unexpected environment-related errors.

- Speed and efficiency: Containers start almost instantly, speeding up build, test, and deployment processes.

- Resource optimization: Running multiple containers on the same server is much more efficient than running multiple VMs, saving hardware costs.

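- Isolation and security: Each container runs in its own isolated environment, separate from other containers and from the host’s processes. If one container crashes, the others keep running. Because containers share the host kernel, this isolation is lighter than a VM’s.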

- Supports Microservices architecture: Docker is the perfect platform for building and managing microservices-based applications. Each service can be packaged and deployed independently in its own container.

- Huge community: Docker has a massive user community and ecosystem (Docker Compose, Kubernetes, Portainer, etc.).

Who should use Docker?

The short answer: almost everyone in software development.

- Developers: Build clean development environments identical to production.

- DevOps engineers: Automate build, test, and deployment pipelines (CI/CD).

- System administrators: Easily manage, monitor, and scale applications.

Conclusion: Knowing Docker is an essential skill

Docker is no longer a "trend" but an essential skill in the modern tech world. It has fundamentally changed how we think about developing and operating software. By "packaging" the complex world of applications into simple, standard containers, Docker has ushered in a new era of speed, efficiency, and stability.

If you haven’t tried it yet, start today. Learning Docker could be one of the best investments for your programming career.
